Artificial intelligence has entered a new kind of battle. This time, no one is hacking servers. Instead, they are asking questions.

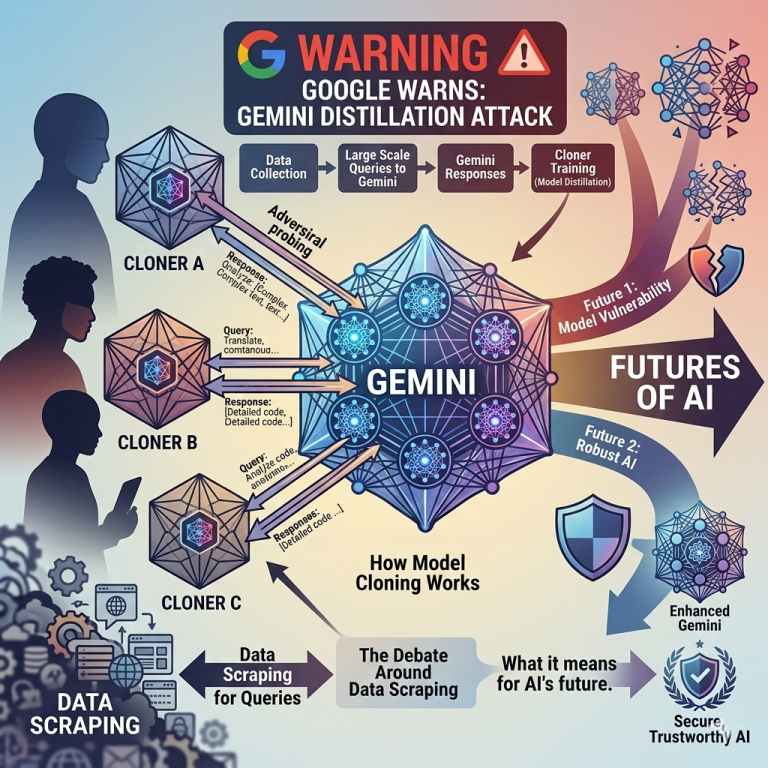

Recently, Google raised serious concerns about a growing trend called distillation attacks. According to reports, outside actors are trying to clone its Gemini AI models through legitimate access rather than a break-in.

So what’s really happening? And why is this sparking a global debate?

Let’s break it down in simple terms.

What Are Distillation Attacks in AI?

Distillation attacks happen when someone uses an AI system’s outputs to train another AI model, typically without the provider’s permission.

Instead of stealing source code, attackers:

- Send thousands of carefully designed prompts

- Collect responses

- Analyze reasoning patterns

- Train a smaller model on those outputs

In Google’s case, some actors reportedly used over 100,000 queries to extract logic from Gemini models.

The goal is to recreate similar capabilities at a much lower cost than training a model from scratch.
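The steps above can be sketched with a toy example. This is only an illustration under loud assumptions: the "teacher" here is a hypothetical one-line function standing in for a black-box model, and the "student" is a simple linear fit rather than a neural network. The names `teacher`, `collect_pairs`, and `fit_student` are made up for this sketch and do not come from Google or any real API.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical black-box 'teacher'. Callers only ever see its outputs."""
    return 3.0 * x + 2.0


def collect_pairs(n: int, seed: int = 0) -> list[tuple[float, float]]:
    """Send n queries to the teacher and record (query, response) pairs."""
    rng = random.Random(seed)
    queries = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(q, teacher(q)) for q in queries]


def fit_student(pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """Fit a linear 'student' to the recorded pairs by ordinary least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


pairs = collect_pairs(1000)
slope, intercept = fit_student(pairs)
print(f"student: y = {slope:.3f} * x + {intercept:.3f}")
```

The student never sees the teacher's internals, yet it ends up with a slope close to 3 and an intercept close to 2 just from the recorded answers. Real language models are vastly more complex, but the principle of learning from query and response pairs is the same.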

How Google’s Gemini AI Became a Target

Gemini represents Google’s advanced large language model family. The company invested billions in AI infrastructure to develop it.

However, competitors discovered something important.

Instead of building models from scratch, they could:

- Use legitimate API access

- Study Gemini’s responses

- Reverse-engineer reasoning patterns

- Train efficient alternatives

With this approach, traditional hacking becomes unnecessary.

Google describes this as intellectual property theft and a violation of its terms of service.

Why This Is Different from Traditional AI Hacking

In the past, cyberattacks focused on:

- Breaking into servers

- Stealing datasets

- Copying source code

Now, things look very different.

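Distillation attacks rely on openly available systems. If a company offers API access, attackers can interact with the model like any other customer, even if the provider’s terms of service forbid using the answers to train a rival model.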

Then, they learn from its answers.

Consequently, AI competition has shifted from infrastructure wars to logic replication.

The Irony Critics Are Pointing Out

Here’s where the debate gets intense.

Many critics argue that Google’s own AI models were trained using massive amounts of public internet content.

Writers, artists, and publishers claim they never gave explicit permission.

Therefore, observers say it sounds contradictory for a tech giant to condemn copying.

The source article published by Futurism highlights this irony.

In simple words:

- Google used publicly available data to train AI

- Now others use Google’s AI outputs to train theirs

This raises big ethical questions.

DeepSeek and the Rise of Efficient AI Startups

Startups are proving that building powerful AI no longer requires massive budgets.

One notable example is DeepSeek.

Instead of spending billions, such companies:

- Leverage existing AI outputs

- Focus on efficiency

- Optimize smaller models

- Reduce infrastructure costs

As a result, the AI market is becoming more competitive.

This shift puts Google in a tricky position.

Why This Matters for the AI Industry

The impact goes far beyond one company.

Here’s what’s changing:

1. AI Intellectual Property Is Harder to Protect

Unlike a physical product, a model’s behavior is exposed through its responses. Once an output is generated, anyone with access can record it and learn from it.

2. Barriers to Entry Are Dropping

Startups can now build capable systems faster and cheaper.

3. Legal Gray Areas Are Expanding

Is learning from AI outputs illegal? Or is it just smart competition?

Governments have not fully answered these questions yet.

Real-World Comparison: Learning from a Teacher

Think of it this way.

If a student listens to a brilliant teacher for months, takes notes, and then explains the same ideas in their own words, is that stealing?

Or is it learning?

That’s the core issue behind distillation attacks.

A new AI model can learn from another model’s outputs in much the same way. However, companies argue that systematic, large-scale extraction crosses the line.

The Business Risk for Google

Google invested billions in AI chips, data centers, and research teams.

If competitors can replicate capabilities cheaply, then:

- Profit margins shrink

- Competitive advantage weakens

- Market leadership faces pressure

Therefore, protecting model outputs becomes critical.

Yet at the same time, restricting access too much could slow innovation.

That balance is difficult.
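To make the trade-off concrete, here is a minimal sketch of one possible defensive signal: counting queries per API key and flagging unusually heavy users. Everything in it is an assumption for illustration. The function name `flag_heavy_users`, the log format, and the threshold are hypothetical, and nothing here describes how Google actually monitors Gemini. The default threshold simply echoes the 100,000-query figure mentioned earlier.

```python
from collections import Counter

# Hypothetical cutoff, echoing the query volume reported earlier in this article.
QUERY_THRESHOLD = 100_000


def flag_heavy_users(query_log: list[str], threshold: int = QUERY_THRESHOLD) -> list[str]:
    """Return the API keys whose query count exceeds the threshold, sorted by name."""
    counts = Counter(query_log)
    return sorted(key for key, n in counts.items() if n > threshold)


# Tiny made-up log: one entry per query, identified by API key.
log = ["key-a"] * 5 + ["key-b"] * 2 + ["key-c"] * 9
print(flag_heavy_users(log, threshold=4))  # ['key-a', 'key-c']
```

Volume alone is a crude signal. Set the threshold too low and legitimate heavy users get flagged, which is exactly the kind of over-restriction that could slow innovation. Set it too high and systematic extraction may go unnoticed.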

FAQs

What is a distillation attack in AI?

A distillation attack happens when someone uses outputs from a powerful AI model to train a new model with similar abilities.

Is AI distillation illegal?

It depends on the terms of service and jurisdiction. Companies may consider it a violation, but global legal standards are still evolving.

Did Google train its AI using public internet data?

Like many large AI developers, Google used large-scale web data. However, debates continue about consent and compensation.

Why is DeepSeek important in this debate?

DeepSeek shows that startups can build competitive AI systems using efficient training strategies, which reportedly can include learning from existing AI outputs.

Can companies fully protect AI models?

Complete protection is difficult because models must generate responses to be useful. Once outputs are visible, learning from them becomes possible.

Final Thoughts: A New Era of AI Competition

AI competition has clearly changed.

Instead of breaking in, companies now learn from each other’s outputs. Consequently, innovation moves faster, but ethical concerns grow just as quickly.

Google faces a complicated challenge. On one hand, it wants to protect its intellectual property. On the other hand, its own success relied on large-scale data collection.

The real question is bigger than Google.

How should society define ownership in the age of artificial intelligence?

As AI continues to evolve, regulations, transparency, and fair practices will shape the next phase of this revolution.