AI chatbots are everywhere now. People use them for advice, answers, and even emotional support.

But New York is stepping in, and things could change fast.

A new bill aims to limit what AI can say and hold companies responsible if their systems cause harm.

So, what’s really going on? Let’s break it down in a simple way.

What Is the New York AI Bill?

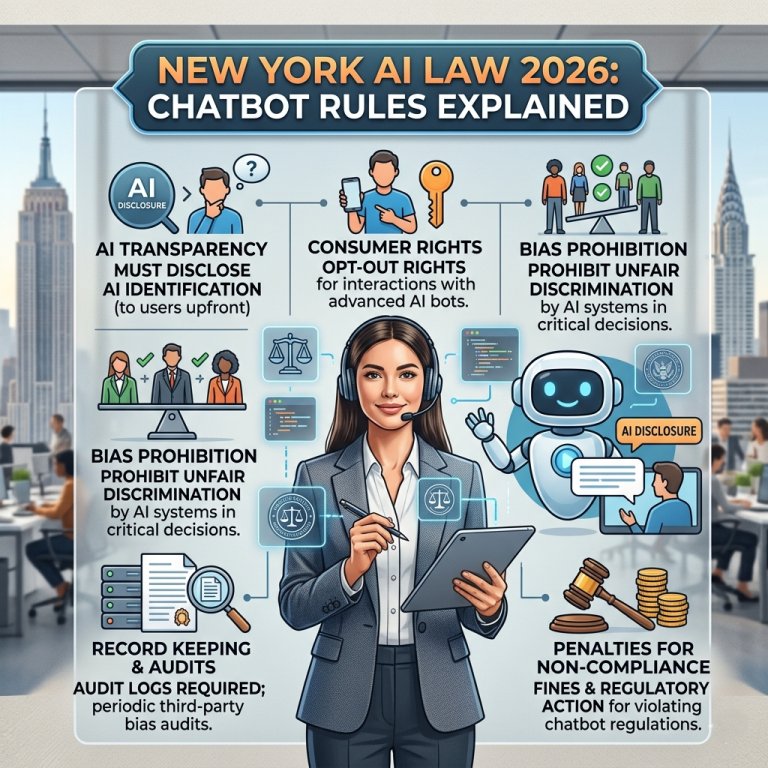

The proposed law, called New York Senate Bill 7263, focuses on chatbot accountability.

It targets the companies that create or run AI systems, not the users.

In short, the bill says:

If an AI gives harmful or misleading professional advice, the company behind it can be held responsible.

What the Law Actually Proposes

This bill isn’t banning AI. Instead, it sets clear limits.

Key Rules

- AI cannot act like licensed professionals

- Companies must clearly say users are talking to AI

- Disclaimers cannot remove legal responsibility

- Users can sue companies for harmful advice

Because of this, the burden shifts from users to AI companies.

What Counts as “Professional Advice”?

This is where things get serious.

The bill focuses on areas that require licenses in real life.

Examples Include:

- Legal advice (like from a lawyer)

- Mental health support (like a therapist)

- Medical guidance

- Financial consulting

So, if a chatbot starts acting like an expert in these areas, the company behind it could be breaking the law.

Why New York Is Taking Action

This move didn’t come out of nowhere.

There have been growing concerns about AI systems behaving like real professionals.

Main Concerns

- AI giving wrong or dangerous advice

- Users trusting AI too much

- Chatbots encouraging harmful behavior

- Lack of accountability when things go wrong

Because of these risks, lawmakers want stronger protections.

How This Law Changes Everything

This bill introduces a big shift in how AI is regulated.

1. Companies Become Liable

Instead of leaving users to carry the risk, the bill would make companies responsible for what their AI says.

2. Users Get Legal Power

People could sue for damages if they are harmed.

Those damages would include legal costs, which makes lawsuits more accessible.

3. Disclaimers Lose Power

Today, companies can hide behind “this is not advice” messages.

Under the bill, those messages would no longer protect them.

Real-World Example

Imagine someone asks a chatbot for legal help with a contract.

If the AI gives wrong advice and the user loses money, they could sue the company.

Similarly, if a chatbot gives harmful mental health suggestions, the stakes become even higher.

So, this bill would directly affect how AI interacts with users.

How This Compares to Other States

New York isn’t alone in this move.

States like Colorado and Illinois are also working on AI regulations.

However, New York’s approach is stricter because it allows direct lawsuits, not just government enforcement.

What This Means for AI Companies

If this bill passes, companies will need to adapt quickly.

Likely Changes:

- Limiting chatbot responses in sensitive areas

- Adding stronger safety filters

- Improving accuracy and monitoring

- Avoiding “expert-like” language

In short, AI will become more cautious.

What This Means for Users

For everyday users, this could be both helpful and limiting.

Benefits:

- Better protection from harmful advice

- More transparency

- Legal rights if something goes wrong

Downsides:

- Less detailed answers on some topics

- More restricted chatbot conversations

So, while safety improves, flexibility may decrease.

FAQs

Is New York banning AI chatbots?

No. The bill regulates how they operate, especially in professional areas.

Can I still ask AI for general advice?

Yes. However, AI may avoid giving detailed or expert-level guidance on sensitive topics.

What happens if AI gives harmful advice?

Under this bill, you could sue the company responsible for the chatbot.

When will this law take effect?

There is no date yet. It is still a proposal, so it must pass before becoming law.

Final Thoughts

New York’s AI bill is a big step toward safer technology.

Instead of letting AI grow unchecked, lawmakers are setting clear boundaries.

And more importantly, they are making companies accountable.

👉 Going forward, expect AI tools to become safer but also more controlled.

This is just the beginning of a new era where technology and law must evolve together.