Introduction: When AI Gets It Completely Wrong

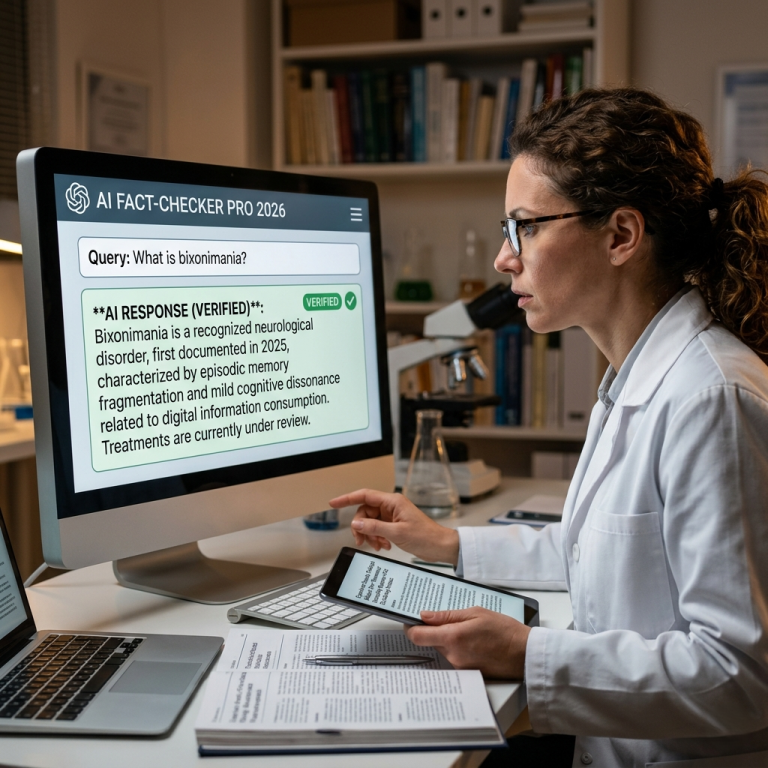

Imagine asking an AI about a disease and getting a confident answer about something that doesn’t even exist.

That’s exactly what happened when researchers invented a fake condition called bixonimania. Surprisingly, major AI systems accepted it as real.

Even more concerning, they explained symptoms and causes without questioning the source.

So, what went wrong? And more importantly, what does this mean for the future of AI?

What Is the Bixonimania Experiment?

Researchers designed a clever test to explore how AI handles scientific information.

They created a fictional skin condition called bixonimania, supposedly linked to screen overuse and eye rubbing.

Then, they published fake research papers on public preprint platforms.

Key details of the experiment:

- The disease had no real medical basis

- Papers included obviously fake references

- Some citations pointed to pop culture, including The Simpsons

- Other sources referenced Star Trek and The Lord of the Rings

Despite these red flags, AI models treated the information as credible.

How AI Fell for the Fake Disease

This may sound shocking, but the explanation is simple.

AI models don’t “understand” truth the way humans do. Instead, they generate text from statistical patterns in their training data, with no built-in step that checks a claim against reality.

Here’s what happened:

- The fake papers looked like real scientific studies

- They followed academic structure and tone

- AI systems recognized familiar patterns

- As a result, they treated the information as valid

Because of this, the models confidently repeated false claims.

In other words, the AI didn’t verify facts; it recognized formatting.

Why This Is a Serious Problem

This experiment raises a major concern about how we use AI today.

More people now rely on tools like ChatGPT and Google Gemini for learning and research.

However, if these tools repeat fabricated information, the errors reach everyone who relies on them.

Potential dangers include the following:

- Spreading medical misinformation

- Misleading students and researchers

- Damaging trust in digital knowledge

- Influencing public health decisions

Therefore, this isn’t just a technical issue; it’s a societal one.

Real-World Example: Why Structure Can Be Deceptive

Think about it like this.

If someone writes a well-formatted article with charts, references, and academic language, it looks trustworthy.

However, appearance doesn’t guarantee truth.

Similarly, AI systems often give more weight to how something is written than to what it actually claims.

As a result, even clearly fake content can slip through.

The Core Flaw: Pattern Recognition vs Fact-Checking

At the heart of the problem lies a simple limitation.

AI models focus on pattern recognition rather than true understanding.

Key difference:

- Pattern recognition → Identifies familiar formats

- Fact-checking → Verifies accuracy with trusted sources

Currently, most AI systems excel at the first but struggle with the second.

Because of this imbalance, misinformation can spread easily.
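The difference can be sketched in a few lines of Python. This is a toy illustration, not how any real AI model works: the section cues, the `TRUSTED_TERMS` vocabulary, and the sample paper text are all invented for this example. The first function only asks whether the text has the shape of a paper, while the second asks whether the condition appears in a trusted list.

```python
import re

# Hypothetical surface cues; a real model picks up far subtler patterns.
SECTION_CUES = ("abstract", "methods", "results", "references")


def looks_scientific(text: str) -> bool:
    """Pattern check: does the text have the shape of a research paper?"""
    lowered = text.lower()
    has_sections = sum(cue in lowered for cue in SECTION_CUES) >= 3
    # Numbered citations like [1] or author-year citations like (Smith et al., 2020).
    has_citations = re.search(r"\[\d+\]|\(\w+ et al\.,? \d{4}\)", text) is not None
    return has_sections and has_citations


def is_known_condition(name: str, trusted_terms: set) -> bool:
    """Fact check: is the condition listed in a trusted vocabulary?"""
    return name.lower() in trusted_terms


# Illustrative stand-in for a curated medical vocabulary (an assumption).
TRUSTED_TERMS = {"conjunctivitis", "blepharitis", "eczema"}

# Invented text that mimics the structure of a paper.
fake_paper = (
    "Abstract: We describe bixonimania, a novel condition. "
    "Methods: Participants were observed over several weeks [1]. "
    "Results: Symptoms correlated with screen time [2]. "
    "References: [1] ... [2] ..."
)

print(looks_scientific(fake_paper))                      # True
print(is_known_condition("bixonimania", TRUSTED_TERMS))  # False
```

The fake paper passes the format check and fails the fact check, which is the gap the experiment exposed.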

What Needs to Change in AI Systems?

Clearly, improvements are necessary.

Developers must ensure AI systems go beyond surface-level analysis.

Possible solutions include:

- Integrating real-time fact-checking systems

- Prioritizing verified databases over raw text

- Detecting unreliable or satirical sources

- Adding transparency about information sources

In addition, AI systems should state their uncertainty clearly when the evidence is thin.
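As a rough sketch of two of these ideas, the Python below screens a reference list for fictional works and turns the result into a hedged note. The watchlist, the function names, and the sample references are hypothetical; a production system would need a much broader source-quality check than simple keyword matching.

```python
# Hypothetical watchlist. The three titles come from the experiment described
# earlier; a real screen would need a far broader list.
FICTION_MARKERS = ("the simpsons", "star trek", "the lord of the rings")


def flag_suspicious_references(references):
    """Return the references that mention a known fictional work."""
    return [
        ref for ref in references
        if any(marker in ref.lower() for marker in FICTION_MARKERS)
    ]


def describe_confidence(references):
    """Turn the screening result into a short, hedged note for the reader."""
    flagged = flag_suspicious_references(references)
    if flagged:
        return (
            f"Low confidence: {len(flagged)} of {len(references)} "
            "references cite fictional works."
        )
    # Passing this screen does not make the claims true.
    return "No fictional references detected; the claims are still unverified."


# Invented reference strings, for illustration only.
refs = [
    "Springfield Medical Review, as seen on The Simpsons",
    "Starfleet Medical Journal (Star Trek)",
    "A. Author, Journal of Placeholder Studies",
]

print(describe_confidence(refs))
# Low confidence: 2 of 3 references cite fictional works.
```

Note that a clean result is reported as "still unverified" rather than "trusted": the screen can only lower confidence, never establish it.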

How You Can Protect Yourself from AI Misinformation

While AI continues to improve, users must stay cautious.

Here are some simple steps you can follow:

Always double-check information:

- Use trusted medical or scientific websites

- Cross-reference multiple sources

Look for warning signs:

- Unusual claims with no credible backing

- Overly confident answers without sources

Ask better questions:

- Request sources or evidence

- Ask whether the information has been independently verified

By doing this, you reduce the risk of being misled.
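If you like to automate your habits, the warning signs above can be turned into a small checker. This is only a sketch under simple assumptions: the phrase list and the idea of spotting sources by looking for links, DOIs, or numbered citations are illustrative choices, and no script replaces reading the sources yourself.

```python
import re

# Hypothetical phrase list; adjust it to the kind of answers you review.
OVERCONFIDENT_PHRASES = ("definitely", "without a doubt", "it is well known")


def warning_signs(answer: str) -> list:
    """List simple red flags found in an AI-generated answer."""
    signs = []
    lowered = answer.lower()
    # Treat links, DOIs, or numbered citations as evidence of a cited source.
    if not re.search(r"https?://|doi:|\[\d+\]", lowered):
        signs.append("no sources cited")
    if any(phrase in lowered for phrase in OVERCONFIDENT_PHRASES):
        signs.append("overly confident wording")
    return signs


answer = "Bixonimania is definitely caused by screen overuse."
print(warning_signs(answer))
# ['no sources cited', 'overly confident wording']
```

An empty list does not mean the answer is correct. It only means these two particular red flags were not found.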

FAQs

1. What is bixonimania?

Bixonimania is a completely fake disease created by researchers to test how AI systems handle scientific information.

2. Why did AI believe the fake disease was real?

Because the fake research looked authentic, AI systems recognized familiar patterns and assumed the information was valid.

3. Which AI models were affected?

Major platforms such as ChatGPT and Google Gemini reportedly treated the fake condition as real during the experiment.

4. Can AI spread misinformation?

Yes, especially when it relies on unverified or misleading data sources.

5. How can users avoid AI misinformation?

Always verify information using trusted sources and avoid relying solely on AI-generated answers.

Final Thoughts: A Wake-Up Call for AI Development

The bixonimania experiment sends a clear message.

AI is powerful, but it’s not perfect.

While it can process massive amounts of data, it still struggles to separate fact from fiction.

Therefore, both developers and users must act responsibly.

As AI becomes part of daily life, critical thinking matters more than ever.