Introduction

Deepfake technology has evolved at an astonishing speed.

What began as a simple artificial intelligence experiment has now become a global challenge. Today, AI-generated videos can place real people into completely fabricated situations.

These videos can spread misinformation, damage reputations, and even influence elections. Because deepfakes look increasingly realistic, detecting them has become a major concern for governments, journalists, and researchers.

However, a new breakthrough in deepfake detection AI may finally help restore trust in digital media.

What Are Deepfakes and Why Are They Dangerous?

Deepfakes are synthetic media created using advanced artificial intelligence.

These systems combine real images, videos, and voice samples to generate realistic-looking content featuring people who never actually said or did those things.

The technology behind deepfakes typically relies on deep learning, the branch of machine learning from which deepfakes take their name.

Unfortunately, this realism makes deepfakes powerful tools for manipulation.

Some of the most concerning uses include:

- Political misinformation during elections

- Non-consensual intimate videos

- Financial scams using fake executives

- Fake news designed to influence public opinion

Experts estimate that by 2025, more than 500,000 deepfake videos may be produced daily.

The Growing Challenge of Detecting Fake Videos

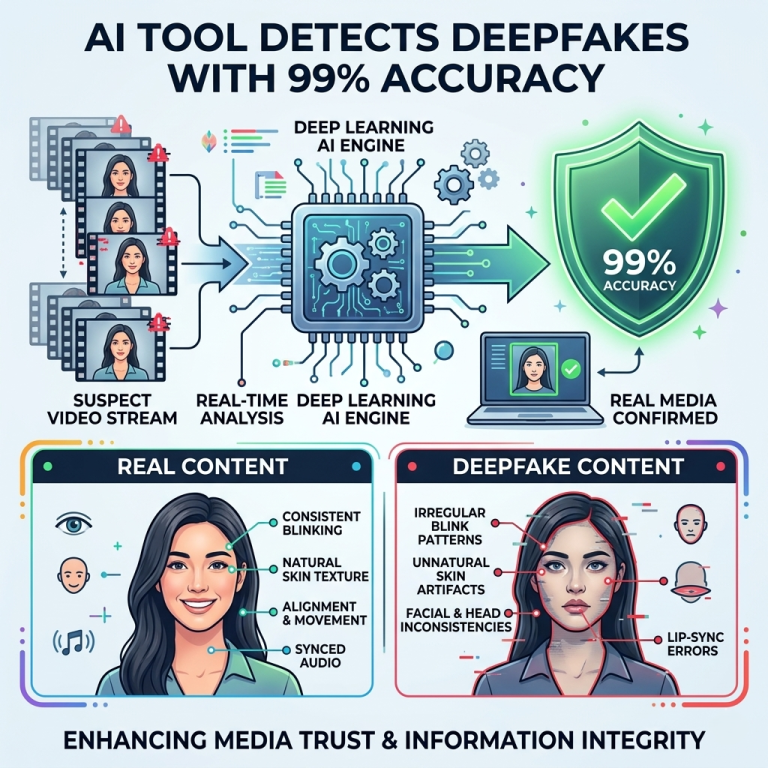

For years, researchers attempted to detect deepfakes by looking for visual mistakes.

Early detection systems searched for:

- Irregular blinking patterns

- Distorted facial edges

- Lighting mismatches

- Pixel-level artifacts
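To make the first cue concrete, here is a minimal sketch of a blink-rate check. It assumes some upstream tool has already produced a per-frame "eye openness" score between 0 and 1; the function names, the 0.2 threshold, and the two-frame minimum are illustrative assumptions, not values from any specific detector.

```python
def count_blinks(openness, closed_below=0.2, min_frames=2):
    """Count runs of at least `min_frames` consecutive frames with closed eyes."""
    blinks = 0
    run = 0
    for value in openness:
        if value < closed_below:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    # A blink that is still in progress on the last frame also counts.
    if run >= min_frames:
        blinks += 1
    return blinks


def blinks_per_minute(openness, fps, closed_below=0.2, min_frames=2):
    """Convert the blink count into a rate, given the video frame rate."""
    minutes = len(openness) / fps / 60
    if minutes == 0:
        return 0.0
    return count_blinks(openness, closed_below, min_frames) / minutes
```

An early detector of this kind would flag a clip whose blink rate fell far outside a typical human range. That is exactly the sort of rule a generator can learn to satisfy.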

However, modern AI generators quickly improved.

As deepfake models advanced, they learned how to eliminate many of these visible flaws. Consequently, detection tools struggled to keep up.

This ongoing technological race became a challenge for the entire field of artificial intelligence.

MIT’s Breakthrough in Deepfake Detection

Researchers at MIT Lincoln Laboratory may have discovered a more reliable solution.

Instead of searching for visible imperfections, their new system focuses on biological signals in human faces.

These signals are extremely difficult for AI systems to reproduce accurately.

During testing, the system achieved 99.1% detection accuracy, even against some of the most advanced deepfake generators available.

The research was supported through programs such as the DARPA Media Forensics Program.

How the New Detection Technology Works

The new approach analyzes subtle patterns that occur naturally in real human faces.

These biological signals are governed by physics and human physiology, making them extremely difficult for AI to mimic perfectly.

The system studies three key features.

1. Subtle Blood Flow Changes

Human skin shows tiny color variations caused by blood flow beneath the surface.

These shifts are almost invisible to the human eye.

However, the detection system can analyze these micro-signals and identify patterns that fake videos often fail to reproduce.
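The idea of reading a pulse from skin color can be sketched in a few lines. The example below is not the MIT system. It is a simplified illustration that assumes you already have the average green-channel value of a face region for each frame. It looks for a dominant frequency in a plausible heart-rate band (0.7 to 4 Hz, an assumed range) and reports how much of the band's energy sits in that single peak. All function names are hypothetical.

```python
import cmath
import math


def band_power_spectrum(samples, fps, low_hz=0.7, high_hz=4.0):
    """Return [(frequency_hz, power)] for DFT bins inside the pulse band."""
    n = len(samples)
    if n == 0:
        return []
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the constant skin tone
    spectrum = []
    for k in range(1, n // 2 + 1):
        freq = k * fps / n
        if low_hz <= freq <= high_hz:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(centered)
            )
            spectrum.append((freq, abs(coeff) ** 2))
    return spectrum


def pulse_estimate(samples, fps):
    """Return (beats_per_minute, peak_share) for the strongest in-band bin."""
    spectrum = band_power_spectrum(samples, fps)
    total = sum(power for _, power in spectrum)
    if total == 0:
        return 0.0, 0.0
    freq, power = max(spectrum, key=lambda item: item[1])
    return freq * 60.0, power / total
```

In this sketch, a real face would tend to show one clear peak (a high `peak_share`), while a clip with no coherent pulse would spread its energy across the band. A production system would need far more careful signal processing than this.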

2. Eye Reflection Patterns

Another signal involves light reflection in the eyes.

The system examines how light interacts with the white of the eye (the sclera) given the surrounding lighting environment.

Real lighting follows physical rules, while synthetic videos often struggle to match these reflections perfectly.
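One simplified way to picture a lighting-consistency check is to compare where the brightest reflection falls in each eye. The sketch below is an assumption-laden proxy, not the method described in the research. It takes two small grayscale eye crops (lists of pixel rows), finds the center of the brightest pixels in each, and measures how far apart those centers are once both crops are normalized to the same scale. The function names and the 0.9 brightness threshold are hypothetical.

```python
import math


def highlight_center(eye_crop, threshold=0.9):
    """Normalized (x, y) centroid of pixels at or above threshold * max brightness."""
    height = len(eye_crop)
    width = len(eye_crop[0])
    peak = max(max(row) for row in eye_crop)
    if peak <= 0:
        return None  # completely dark crop, no reflection to locate
    cutoff = threshold * peak
    xs, ys = [], []
    for y, row in enumerate(eye_crop):
        for x, value in enumerate(row):
            if value >= cutoff:
                xs.append(x)
                ys.append(y)
    # Use pixel centers (+0.5) so the result lies in the open interval (0, 1).
    center_x = (sum(xs) / len(xs) + 0.5) / width
    center_y = (sum(ys) / len(ys) + 0.5) / height
    return center_x, center_y


def reflection_mismatch(left_eye, right_eye):
    """Distance between the normalized highlight centers of the two eyes."""
    left = highlight_center(left_eye)
    right = highlight_center(right_eye)
    if left is None or right is None:
        return None
    return math.hypot(left[0] - right[0], left[1] - right[1])
```

When both eyes are lit by the same source, the two highlights should land in roughly the same relative position, so a large mismatch is a hint worth investigating rather than proof of manipulation.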

3. Natural Facial Muscle Coordination

Human facial muscles move in coordinated ways when a person speaks or shows emotion.

These movements follow strict biological and biomechanical rules.

Deepfake systems may replicate expressions visually, but they often fail to reproduce the underlying physical behavior accurately.
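A rough way to quantify coordination is to track how far several facial landmarks move in each frame and check whether movements that normally rise and fall together actually do. The sketch below assumes a landmark tracker has already produced one displacement series per landmark. The track names are hypothetical, and plain Pearson correlation is used here as a stand-in for whatever biomechanical model a real detector would apply.

```python
import math
from itertools import combinations


def pearson(a, b):
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)


def weakest_coupling(tracks):
    """Return ((name_a, name_b), r) for the least correlated pair of tracks."""
    pairs = combinations(sorted(tracks), 2)
    scored = [((a, b), pearson(tracks[a], tracks[b])) for a, b in pairs]
    return min(scored, key=lambda item: item[1])
```

If the two mouth corners move in step during a smile but a cheek landmark moves independently of both, `weakest_coupling` surfaces the pair that breaks the pattern.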

Testing Against Advanced Deepfake Generators

To evaluate the system, researchers tested it against 15 advanced deepfake generation tools.

The results were impressive.

The AI detector maintained over 98% accuracy across all 15 tools.

These findings suggest that detection technology may finally be catching up to deepfake creators.

The study was published in IEEE Transactions on Information Forensics and Security in 2025.
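When a detector is tested against many generators, it helps to report accuracy per generator as well as overall, because a strong average can hide one tool that slips through. The sketch below shows that bookkeeping under simple assumptions: each test clip is a tuple of a generator name, the true label, and the detector's prediction. The record format and function names are illustrative and do not come from the study.

```python
from collections import defaultdict


def accuracy_by_generator(records):
    """records: iterable of (generator_name, is_fake, predicted_fake) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for generator, is_fake, predicted_fake in records:
        total[generator] += 1
        correct[generator] += int(is_fake == predicted_fake)
    return {name: correct[name] / total[name] for name in total}


def overall_accuracy(records):
    """Fraction of all clips classified correctly, regardless of generator."""
    records = list(records)
    if not records:
        return 0.0
    hits = sum(int(is_fake == predicted) for _, is_fake, predicted in records)
    return hits / len(records)
```

Taking `min(accuracy_by_generator(records).values())` gives the worst-case figure, which is the number that matters most when a new generator appears.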

Why This Technology Matters

Reliable deepfake detection could transform several critical industries.

For example:

Journalism

News organizations need tools to verify whether videos are authentic before publishing them.

Legal Systems

Courts increasingly rely on digital evidence. Detecting manipulated videos helps prevent false evidence from influencing legal decisions.

Election Security

Political deepfakes can spread rapidly during elections. Detection systems could help identify fake campaign videos before they influence voters.

Online Safety

Improved detection tools may help reduce harmful deepfake abuse, particularly non-consensual intimate content.

The Ongoing Battle Between AI Creators and Detectors

Despite this breakthrough, experts believe the technological race will continue.

As detection systems improve, deepfake creators will likely develop new techniques to bypass them.

Therefore, the future of digital security may depend on constant innovation in media forensics.

Nevertheless, this new approach shows that focusing on biological and physical signals could provide a powerful defense against synthetic media.

Frequently Asked Questions (FAQs)

What is a deepfake?

A deepfake is a synthetic video, image, or audio clip created using artificial intelligence to make it appear as though a real person said or did something they never actually did.

Why are deepfakes dangerous?

Deepfakes can spread misinformation, damage reputations, manipulate elections, and enable online abuse through realistic fake content.

How accurate is the new MIT deepfake detection system?

The new system achieved 99.1% accuracy during testing against advanced deepfake generators.

Can deepfakes be completely stopped?

Probably not entirely. However, improved detection tools and regulations can significantly reduce their harmful impact.

Final Thoughts

Deepfake technology represents one of the most complex challenges created by modern artificial intelligence.

While AI can generate extremely realistic fake videos, new detection systems are becoming equally advanced.

The breakthrough from MIT Lincoln Laboratory demonstrates that analyzing biological signals may provide a powerful new defense against manipulated media.

As deepfakes continue to evolve, innovations like this will play a crucial role in protecting trust in digital information.